Validated twice. Two completely different domains.

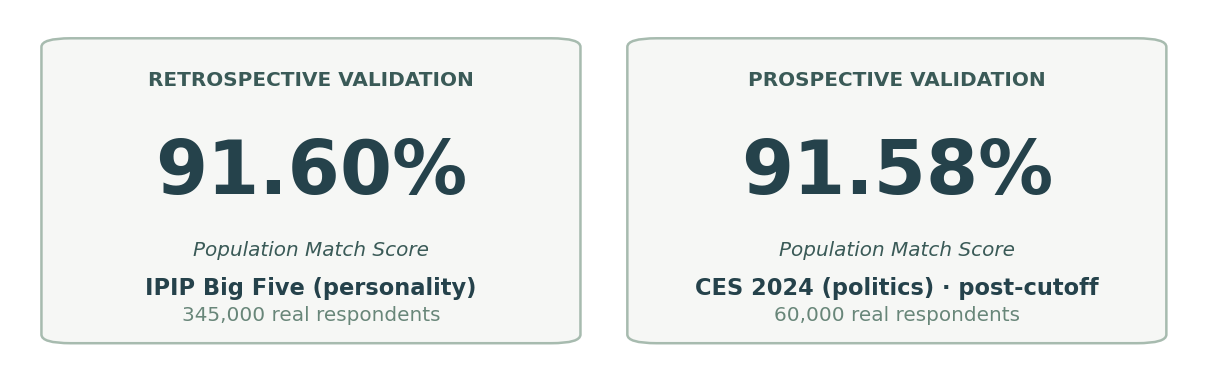

Two independent studies. Both at 91% Population Match Score.

We didn't test once. We tested in two completely different ways. First, a retrospective study against a personality inventory the model could have seen during training: the strict scientific benchmark. Then, a prospective study against a political survey published after our model's training cutoff: a leakage-resistant test of true predictive power. Both landed at roughly 91% Population Match Score.

First, a quick primer · how the tool works

1 · Build the people

We generate synthetic personas from public population distributions: U.S. Census demographics for age, region, education and income, plus published personality and value profiles. Each persona has a complete, internally consistent identity.
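A minimal sketch of the sampling idea, assuming each attribute is drawn from a published marginal distribution. The category shares below are placeholders rather than real Census figures, and `sample_persona` is a hypothetical helper. A production pipeline would likely sample from joint distributions so that attributes such as education and income stay consistent with each other.

```python
import random

# Placeholder marginal distributions. The shares are illustrative only,
# NOT real Census figures.
MARGINALS = {
    "age_band": {"18-29": 0.20, "30-44": 0.25, "45-64": 0.33, "65+": 0.22},
    "region": {"Northeast": 0.17, "Midwest": 0.21, "South": 0.38, "West": 0.24},
    "education": {"HS or less": 0.38, "Some college": 0.28, "Bachelor's+": 0.34},
}


def sample_persona(rng: random.Random) -> dict:
    """Draw one persona by sampling each attribute from its marginal.

    Sampling attributes independently is a simplification: it ignores
    correlations between attributes.
    """
    persona = {}
    for attribute, shares in MARGINALS.items():
        categories = list(shares)
        weights = list(shares.values())
        persona[attribute] = rng.choices(categories, weights=weights, k=1)[0]
    return persona


rng = random.Random(42)  # fixed seed so the draw is reproducible
personas = [sample_persona(rng) for _ in range(500)]
```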

2 · Ask them like real respondents

When you put a message, a poll, or a script in front of them, each persona reacts in their own voice, shaped by their personality, their life context, and the local culture they live in. The same persona stays consistent across every question.

3 · Verify against reality

To make sure the personas actually behave like real humans, we run published surveys through them and compare their answer distributions with those of the real respondents. Below are two of those tests.

Headline · Population Match Score

Population Match Score = 100 × (1 − |synthetic mean − real mean| / scale range), averaged across items. A score of 100 means our synthetic personas' average answer is identical to real respondents'; 50 means our error is half the scale. We chose this metric over R² because it stays interpretable across rating scales (1-5 personality, 1-7 ideology, 0-100 thermometers) and answers a plain question: how close are we to real people, on the scale the researcher used?
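The formula can be sketched in a few lines of Python. This is an illustration, not the production scoring code: `population_match_score` is a hypothetical name, the item means are made up, and "scale range" is assumed to mean maximum minus minimum (4 for a 1-5 scale).

```python
def population_match_score(synthetic_means, real_means, scale_ranges):
    """Average of 100 * (1 - |synthetic - real| / range) across items."""
    if not (len(synthetic_means) == len(real_means) == len(scale_ranges)):
        raise ValueError("all inputs must have one entry per item")
    if not synthetic_means:
        raise ValueError("need at least one item")
    per_item = [
        100.0 * (1.0 - abs(s - r) / width)
        for s, r, width in zip(synthetic_means, real_means, scale_ranges)
    ]
    return sum(per_item) / len(per_item)


# Made-up means for three items on different scales (not study data).
synthetic = [3.4, 4.5, 55.0]
real = [3.0, 4.2, 60.0]
ranges = [4, 6, 100]  # assumption: 1-5 spans 4 points, 1-7 spans 6, 0-100 spans 100

score = population_match_score(synthetic, real, ranges)
print(f"{score:.1f}")  # 93.3
```

Because each gap is divided by its own scale range before averaging, a 0.4-point miss on a 1-5 item and a 5-point miss on a 0-100 thermometer contribute on the same footing.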

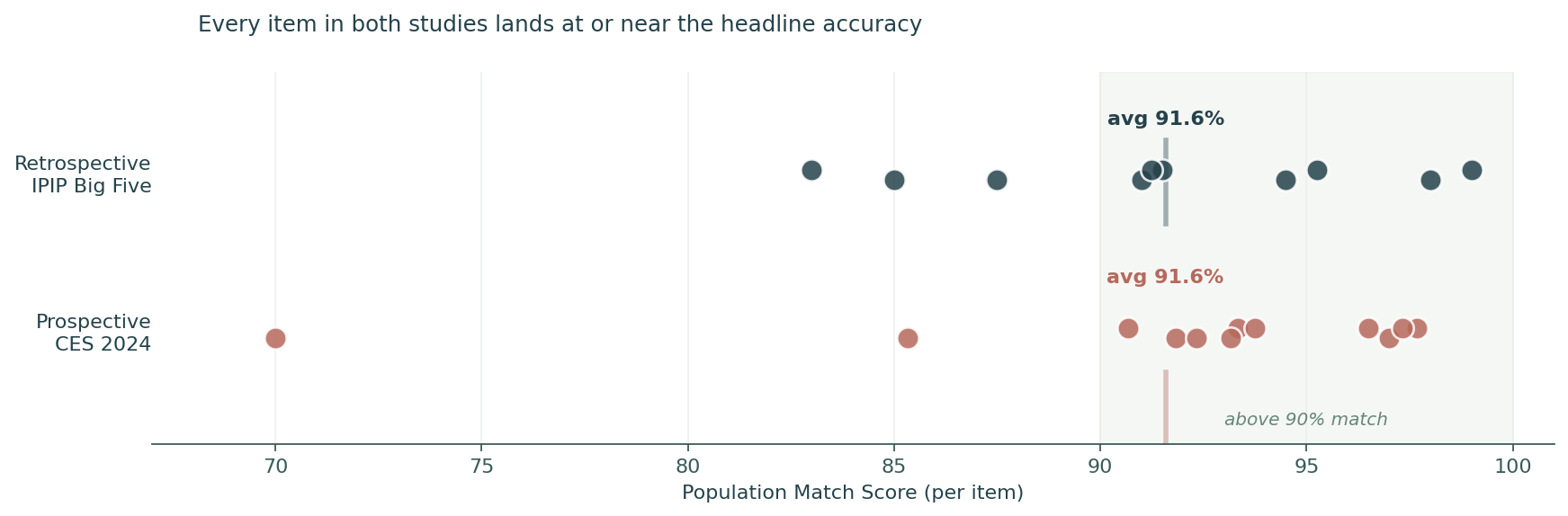

Per-item breakdown

Every dot is one item. The shaded zone marks items above 90% match. The headline is not an average over wild swings. In both studies, the bulk of items cluster between 87% and 99% match, on the same scale, against completely different real-world populations.

Study 1 · Retrospective · Personality · IPIP Big Five

Personality test, against 345,000 real respondents

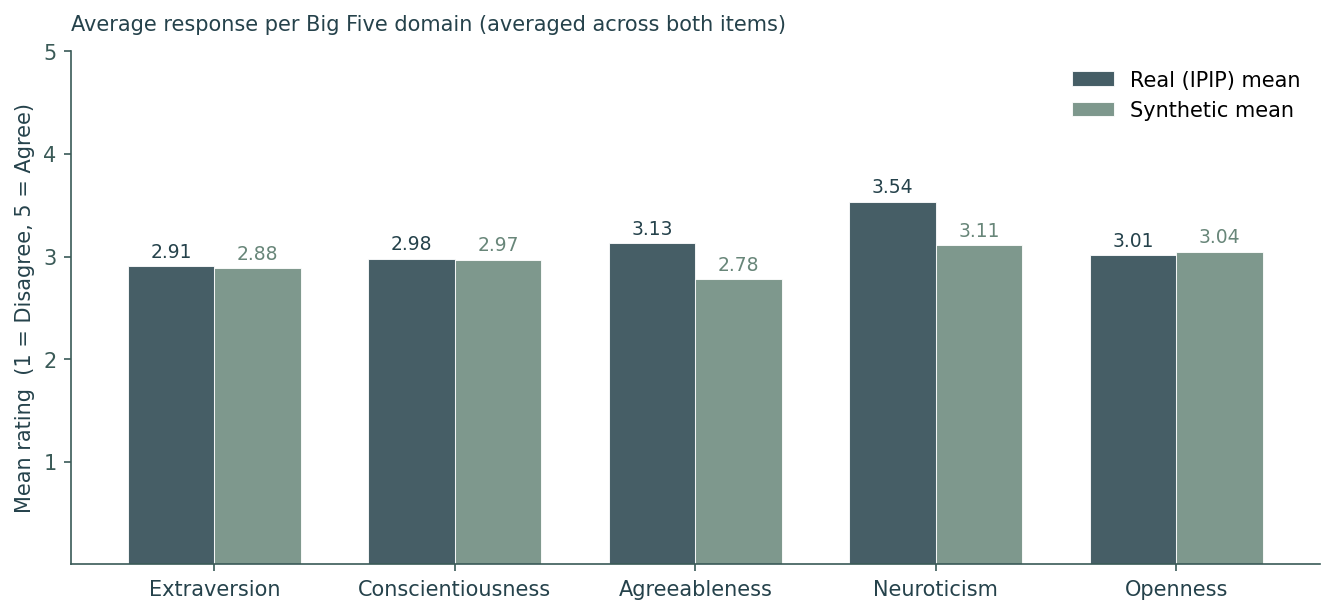

The Big Five (OCEAN) is among the most replicated personality models in psychology. We ran 500 synthetic personas through the standard 10-item version on a 1-to-5 agreement scale, then compared their answers with those of 345,443 real Americans from the Open Psychometrics dataset. Result: 91.60% Population Match Score. The synthetic mean lands within roughly a third of a scale point of the real mean on a typical item.
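The "third of a scale point" figure follows from inverting the score formula. The sketch below assumes the range of a 1-to-5 scale is 4 (maximum minus minimum) and treats the headline score as if it applied to one typical item.

```python
score = 91.60          # headline Population Match Score for Study 1
scale_range = 5 - 1    # assumption: range is max minus min on a 1-to-5 scale

# Invert score = 100 * (1 - gap / range) to recover the average absolute gap.
mean_gap = (1 - score / 100) * scale_range
print(f"{mean_gap:.2f} scale points")  # 0.34 scale points
```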

Honest caveat: this dataset has been on the public web since 2018 and was likely in our model's training data. It's the strict benchmark, not the leakage-resistant one. That's why we ran a second study (below).

Per Big Five trait

Synthetic personas reproduce the real population's average score on every Big Five trait. Side-by-side bars show the synthetic mean (lighter) next to the real mean (darker) for each trait.

Study 2 · Prospective · Politics · CES 2024 · post-cutoff

Then we ran a study our model has never seen

To rule out memorization, we ran the same kind of comparison against the Cooperative Election Study 2024 Common Content: 60,000 American adults, fielded by YouGov for Harvard, first released on Harvard Dataverse on April 3, 2025, after our model's training cutoff. Twelve numeric items (job approval, ideology ratings, self-rated ideology) were pre-registered before the run.

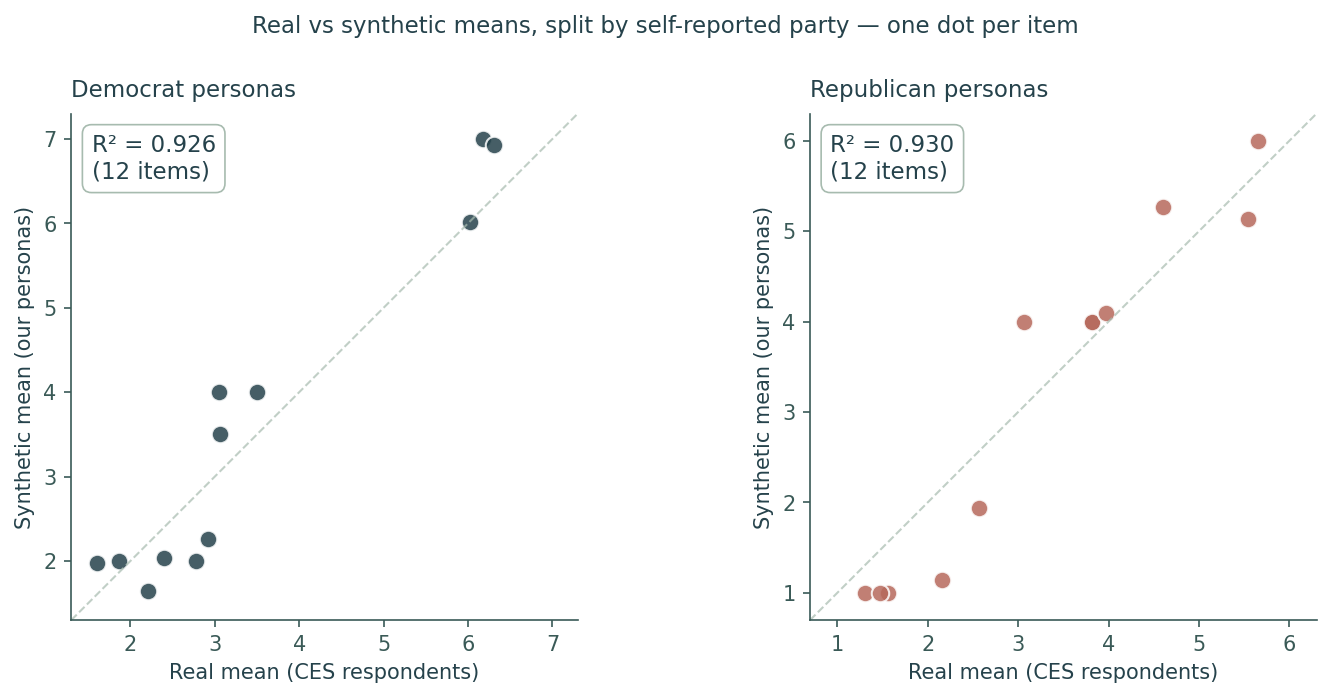

Result: 91.58% Population Match Score, within a tenth of a percentage point of the retrospective IPIP run, on data our model could not have memorized. Ten of the twelve items land within half a scale point of the real mean; all twelve land within one. When we split the personas by political party and check whether our Democrats look like real Democrats and our Republicans look like real Republicans, we get 88.35% within-cohort match. A model that had only memorized population averages would fail the within-cohort test by construction.
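A toy example shows why the within-cohort test catches a memorizer. The numbers are made up, the two cohorts are assumed to be equal in size, and the within-cohort score is assumed to be the same match formula applied per party and then averaged. The page does not spell out the exact aggregation.

```python
def match_score(synthetic_mean, real_mean, scale_range):
    """Single-item match: 100 * (1 - |synthetic - real| / range)."""
    return 100.0 * (1.0 - abs(synthetic_mean - real_mean) / scale_range)


SCALE_RANGE = 6  # assumption: a 1-7 item spans 6 points

# Made-up real cohort means on one polarized item (not CES data).
real = {"Democrat": 2.0, "Republican": 6.0}
real_population_mean = sum(real.values()) / len(real)  # 4.0 with equal cohorts

# A "memorizer" answers with the population average for every persona.
memorizer = {party: real_population_mean for party in real}
memorizer_population_mean = sum(memorizer.values()) / len(memorizer)

overall = match_score(memorizer_population_mean, real_population_mean, SCALE_RANGE)
within = sum(
    match_score(memorizer[party], real[party], SCALE_RANGE) for party in real
) / len(real)

print(f"overall: {overall:.1f}, within-cohort: {within:.1f}")
# overall: 100.0, within-cohort: 66.7
```

The memorizer is perfect on the population average yet misses each party by two scale points, so its within-cohort score drops sharply.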

The receipt

Each dot is one CES item. Left: Democrat personas predicting the Democrat real mean. Right: Republicans predicting Republicans. Both panels hug the diagonal. Our personas reproduce the partisan structure of the response, not just the population average. CES Common Content DOI: 10.7910/DVN/X11EP6 · first released 2025-04-03 · model cutoff: Q1 2025.

What this does and doesn't prove: the personas reproduce the central tendency of real Republican and real Democrat ratings on items the model couldn't have memorized. They do not yet reproduce the full shape of the distribution: on bimodal partisan items, synthetic answers show less variance than real answers. We surface this as the next open problem rather than hide it.
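The gap between matching a mean and matching a distribution is easy to see with made-up data. In this sketch both answer sets have the same mean on a 1-7 scale, but the synthetic set is pulled toward the middle, so a mean-based score would look perfect while the spread is far too narrow.

```python
from statistics import mean, pstdev

# Made-up answers on a 1-7 scale; not study data.
real_answers = [1, 1, 2, 1, 7, 7, 6, 7]        # bimodal: two camps at the extremes
synthetic_answers = [3, 3, 4, 3, 5, 5, 4, 5]   # same mean, clustered near the middle

same_mean = mean(real_answers) == mean(synthetic_answers)

# Ratio below 1 means the synthetic answers are less spread out than the real ones.
spread_ratio = pstdev(synthetic_answers) / pstdev(real_answers)
print(same_mean, f"{spread_ratio:.2f}")  # True 0.31
```

A check like this spread ratio, run per item, is one simple way to track the narrowing alongside the mean-based score.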

What this means

Two independent validations on completely different real-world populations both land at roughly 91% Population Match Score. One study the model could have memorized. One it couldn't. Both measurements landed in the same band. That's evidence our personas model people, not memorized distributions.

For the technically minded: the literal scores are 91.60% and 91.58%, within the sampling noise of either study at this size. The magnitude is the signal, not the third digit.
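A back-of-envelope check of the sampling-noise claim, under stated assumptions: 500 personas as in Study 1, a per-item answer standard deviation of 1.0 on a 1-to-5 scale (a placeholder, since the page does not report it), and a scale range of 4. It looks at the noise on a single item; averaging across items would shrink it, though not in a simple way because the same personas answer every item.

```python
import math

n_personas = 500        # persona count reported for Study 1
assumed_sd = 1.0        # assumption: per-item answer SD on a 1-to-5 scale
scale_range = 5 - 1     # assumption: range is max minus min

# Standard error of one item's synthetic mean, in scale points.
standard_error = assumed_sd / math.sqrt(n_personas)

# The same noise expressed in Population Match Score points for one item.
noise_in_score_points = 100 * standard_error / scale_range

observed_gap = 91.60 - 91.58  # difference between the two headline scores
gap_is_within_noise = observed_gap < noise_in_score_points

print(f"{noise_in_score_points:.2f} score points of noise per item")
# 1.12 score points of noise per item
```

Under these assumptions the 0.02-point difference between the two studies is far smaller than the noise on any one item.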

Synthetic people are a strong first signal. Pair them with real-world research for high-stakes decisions. Methodology and per-item gaps are surfaced openly above. A copy of the validation report is available on request.